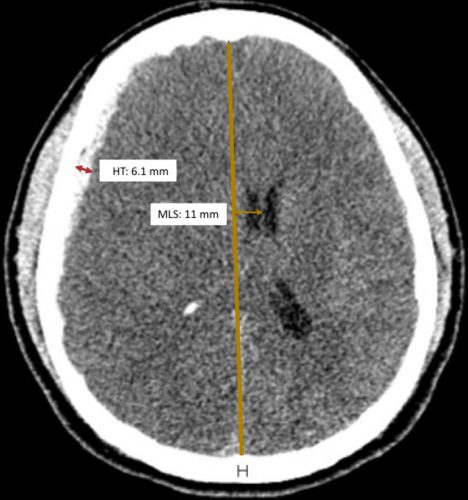

Here’s something you may not have heard of before: the Zumkeller Index. Most trauma professionals who care for patients with serious head trauma already recognize the importance of quantifying extra-axial hematoma thickness (HT) and midline shift (MLS) of the brain. Here’s a picture to illustrate the concept:

Source: Trauma Surgery Acute Care Open

Zumkeller and colleagues first described using the difference between these two values to prognosticate outcomes in severe TBI in 1996.

Zumkeller Index (ZI) = Midline shift (MLS) − Hematoma thickness (HT)
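The arithmetic is about as simple as it gets, but here is a minimal Python sketch to make the sign convention concrete. The function name is hypothetical, both measurements are assumed to be in millimeters, and rounding to 0.1 mm is an assumption about measurement precision, not something the paper specifies.

```python
def zumkeller_index(midline_shift_mm: float, hematoma_thickness_mm: float) -> float:
    """Return ZI = MLS - HT in millimeters.

    Positive ZI: the shift is larger than the hematoma alone explains.
    Zero or negative ZI: the hematoma thickness accounts for the shift.
    Rounded to 0.1 mm (assumed measurement precision) to hide float noise.
    """
    return round(midline_shift_mm - hematoma_thickness_mm, 1)


# A 12 mm shift with an 8 mm subdural gives ZI = +4.0 mm (a bad sign).
print(zumkeller_index(12.0, 8.0))
# A 5 mm shift with a 10 mm subdural gives ZI = -5.0 mm.
print(zumkeller_index(5.0, 10.0))
```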

Intuitively, we’ve been using this all along. At some point, we all learned that a midline shift larger than the hematoma thickness is a bad sign. The simplest explanation is that the underlying brain is injured and swelling, so the shift is greater than the hematoma alone can account for.

The authors of a recent paper from Brazil set out to quantify the prognostic value of the ZI with a post-hoc analysis of a previously completed prospective study. They limited their study to adult patients with an acute traumatic subdural hematoma confirmed by CT scan. The data covered the four years from 2012 through 2015.

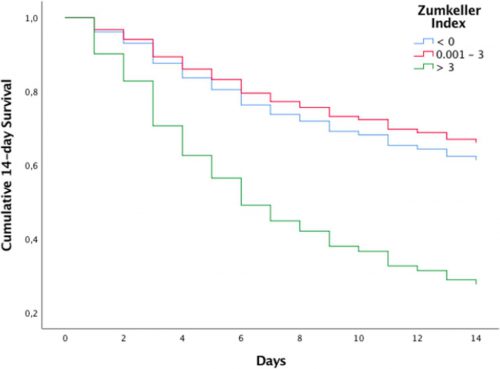

They compared demographics and outcomes in three cohorts of ZI:

- Zero or negative ZI, meaning that the midline shift was equal to or less than the hematoma thickness

- ZI from 0.1 mm to 3.0 mm

- ZI > 3.0 mm
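If you want to see how a measured ZI falls into the study’s three cohorts, here is a small Python sketch. The function name and labels are hypothetical, and it assumes measurements are reported to 0.1 mm, so any positive value up to 3.0 mm lands in the middle cohort.

```python
def zi_cohort(zi_mm: float) -> str:
    """Assign a ZI value (mm) to one of the three cohorts used in the study."""
    if zi_mm <= 0:
        # Shift no larger than the hematoma thickness
        return "ZI <= 0 mm"
    if zi_mm <= 3.0:
        # Assumes 0.1 mm precision, so 0 < ZI <= 3.0 is the 0.1-3.0 mm group
        return "ZI 0.1-3.0 mm"
    # Shift exceeds hematoma thickness by more than 3 mm
    return "ZI > 3.0 mm"


for zi in (-5.0, 0.0, 2.5, 3.0, 4.0):
    print(zi, zi_cohort(zi))
```

The boundaries are inclusive at 0 and 3.0 mm to match the cohort definitions above.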

And here are the factoids:

- A total of 114 patients were studied, and the mechanism of injury was split roughly 50:50 between motor vehicle crashes and falls

- About two-thirds were classified as severe TBI based on GCS, and the rest as mild to moderate

- Median initial GCS fell from 6 in the low ZI group to 3 in the highest ZI group, indicating more severe injuries in the highest ZI group

- 14-day mortality was 91% in the highest ZI group, versus the low 30% range in the other two groups

- Regression analysis showed that patients with ZI > 3 mm were about eight times more likely to die within 14 days than the others

Source: Trauma Surgery Acute Care Open

Bottom line: This study confirms and quantifies something that many of us have been using unconsciously all along. Of course, there are possible confounding factors that were not accounted for in this study. Patients with more severe injuries also tended to have subarachnoid hemorrhage and/or intraventricular blood, and both are predictors of a worse prognosis. But this is a nice study that puts numbers on our subjective impressions.

The Zumkeller Index is easy to calculate with the measuring tool in your PACS application. It can help you decide how aggressively to treat your patient, and may help the neurosurgeons decide who should receive a decompressive craniectomy and how soon.

So now go out and amaze your friends! You’ll be the life of the party!

Reference: Mismatch between midline shift and hematoma thickness as a prognostic factor of mortality in patients sustaining acute subdural hematoma. Trauma Surgery & Acute Care Open 2021;6:e000707. doi: 10.1136/tsaco-2021-000707